There is something deeply satisfying about a system that simply answers immediately.

You click a button, something happens.

You ask for data, it gives data.

Cause and effect.

Modern software architecture increasingly treats this as naive.

Instead, systems are often built around queues from the beginning. Every action becomes an event, every event enters a broker, every broker feeds workers, every worker updates projections, invalidates caches, emits more events, and somewhere in the distance a user eventually sees the result.

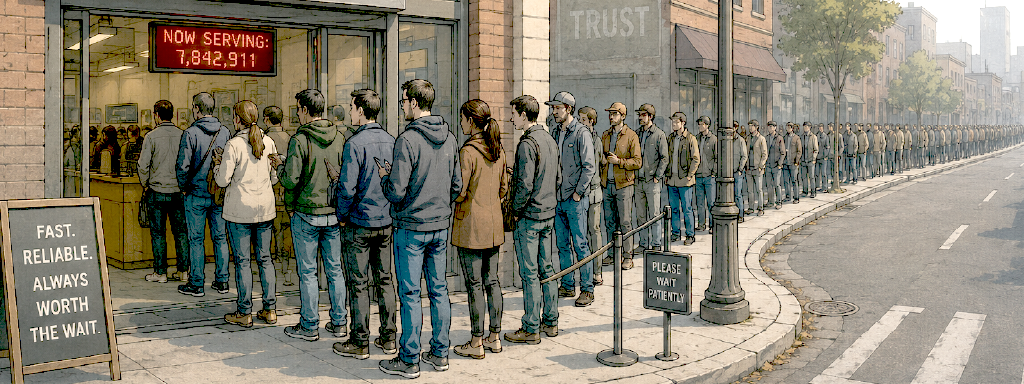

Nobody Likes Queues

And yet, in the physical world, queues are rarely considered signs of efficiency. Humans instinctively read them as visible evidence that capacity and demand are mismatched. Even the word “queue” wastes four vowels for nothing.

A restaurant with no queue feels efficient.

A highway with no traffic feels efficient.

An airport with no lines feels miraculous.

Queues only appear when something can’t keep up.

This sounds obvious in real life, but in software we somehow convinced ourselves that queues are architecture.

They are not architecture.

Queues Can Be Useful, And Your Worst Enemy

Queues aren’t inherently bad. Real systems experience bursts, and workloads do fluctuate. Some operations genuinely belong in the background:

- video encoding

- email delivery

- in-depth data analysis

- anything to do with LLMs

These are naturally asynchronous tasks. You wouldn’t expect a webpage to freeze while a 4K video transcodes. Queues are often the correct tool for absorbing temporary workloads.

The problem begins when the queue stops being a small tactical component and starts dictating the shape of the entire infrastructure.

And software behaves very differently depending on whether it is designed around:

- answering questions

- transporting queued work

The difference is subtle at first.

Suppose a user opens a dashboard. One approach is straightforward: query the current state directly, compute the result and return it.

The other approach is increasingly common: Any state mutation is queued, derived views are precomputed, synchronized into a distributed cache, projected, replayed, returned, stamped, lost, found again, signed in triplicate, indexed, lost again, and finally spit out to an asynchronous listener method that may or may not yield the result to the user.

Instead of paying the computational cost when the user asks the question, the system pays continuously, forever, in preparation for hypothetical future questions. This is one of the strangest tradeoffs in modern software engineering: we increasingly spend enormous infrastructure resources avoiding direct queries.

Queries have become unfashionable because they expose latency honestly. Sometimes exposing latency is the only way to avoid a disaster.

If a query is expensive, the user waits. The problem is visible immediately.

When everything is precomputed, the latency still exists; it has simply been converted into operational complexity. This is why queue-heavy systems often feel deceptively scalable early on. The frontend is perceived as “fast” because it stopped doing synchronous work. But the work never disappeared. It merely became detached from the interaction that caused it.

Queues As a Shortcut To Disaster

Detached work obscures problems because it is removed from the interaction that caused it. And detached work accumulates.

This is true mathematically as much as architecturally. A queue only grows when incoming work exceeds processing capacity. Sustained queues are not neutral structures. They are evidence that the system is operating beyond equilibrium.

Healthy queues are usually near-empty.
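To make the arithmetic concrete, here is a small Python sketch. It assumes two simplified models that are not taken from any real system: a fluid model where a fixed amount of work arrives and a fixed amount is processed every tick, and the textbook M/M/1 queue, which assumes Poisson arrivals and exponentially distributed service times. The function names are hypothetical.

```python
def backlog_over_time(arrivals_per_tick: int, capacity_per_tick: int, ticks: int) -> list[int]:
    """Fluid model: each tick a fixed amount of work arrives, then up to capacity_per_tick is processed."""
    depth = 0
    history = []
    for _ in range(ticks):
        depth += arrivals_per_tick
        depth -= min(depth, capacity_per_tick)  # cannot process more than is waiting
        history.append(depth)
    return history


def mm1_mean_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean number of jobs in an M/M/1 queue: rho / (1 - rho), defined only for rho < 1."""
    rho = arrival_rate / service_rate  # utilization
    if rho >= 1:
        return float("inf")  # no steady state: the backlog grows without bound
    return rho / (1 - rho)


print(backlog_over_time(100, 100, 5))  # [0, 0, 0, 0, 0]
print(backlog_over_time(110, 100, 5))  # [10, 20, 30, 40, 50]
for utilization in (0.5, 0.8, 0.9, 0.99):
    print(utilization, round(mm1_mean_in_system(utilization, 1.0), 2))
```

Ten percent too much work per tick never levels off; it just adds ten more items every tick. Under the M/M/1 assumptions, the average number of jobs in the system is 1 at 50% utilization, 4 at 80%, 9 at 90% and 99 at 99%, because random bursts momentarily exceed capacity. A queue that is rarely empty usually means the system is running close to, or beyond, what it can handle.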

You can observe this psychologically in the real world too.

A short queue feels normal.

A long queue feels ominous.

Software engineers should follow the same instinct.

If dashboards need to be built to display queue depth, lag, backlog, delayed processing or retry accumulation, queues are no longer temporary buffers; they have become part of the core model. And this has consequences.

Entire categories of complexity appear:

- delivery guarantees (or rather, promises) such as at-least-once and “exactly-once”

- deduplication logic with no clear semantic connection to the problem being solved

- synchronization race conditions

A direct function call quietly evolves into an ecosystem.

Ironically, many of the alternatives are simpler, cheaper, and faster. Direct querying is one.

Computers are fast. They were fast 30 years ago; they are even faster today. Modern databases are extraordinarily capable. A well-designed query against properly indexed data is often dramatically more efficient than continuously precomputing possible future state transitions through asynchronous pipelines, or passing the query through multiple hands.

Especially when users may never ask the question in the first place.
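As a rough illustration of answering the question at the moment it is asked, here is a minimal Python sketch using the standard-library `sqlite3` module. The `orders` table, its index and the `dashboard_summary` function are hypothetical; a production database would look different, but the shape is the same: one indexed query against current state, with no pipeline in between.

```python
import sqlite3

# Hypothetical schema for a dashboard that shows one customer's order totals.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER NOT NULL, amount_cents INTEGER NOT NULL)"
)
# The index lets the query below look up one customer's rows instead of scanning the whole table.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
conn.executemany(
    "INSERT INTO orders (customer_id, amount_cents) VALUES (?, ?)",
    [(1, 1500), (1, 2500), (2, 999)],
)


def dashboard_summary(customer_id: int) -> tuple[int, int]:
    """Compute order count and total from current state, only when someone asks."""
    row = conn.execute(
        "SELECT COUNT(*), COALESCE(SUM(amount_cents), 0) FROM orders WHERE customer_id = ?",
        (customer_id,),
    ).fetchone()
    return row[0], row[1]


print(dashboard_summary(1))  # (2, 4000)
```

Nothing is recomputed when orders change, and nothing is computed at all for customers whose dashboard is never opened.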

Another alternative is reducing unnecessary work entirely. A surprising amount of queued traffic is meaningless churn that exists only to accommodate the queueing itself.

Many systems improve more from deleting work than parallelizing it.

There is also backpressure, one of the healthiest ideas in systems design.

Backpressure is honesty. Instead of accepting infinite work into an ever-growing queue, the system pushes back:

S L O W. D O W N.

The real world does this naturally. Elevators do not accept infinite passengers. Roads eventually gridlock. Restaurants stop seating customers.

Queues appear temporarily, but healthy systems ultimately regulate inflow instead of pretending capacity is infinite. Studies of induced demand have shown that adding lanes to highways can make congestion worse, and that consistently heavy traffic tends to resolve itself over time as some drivers start using alternate routes.

Infinite buffering is not scalability; it is delayed failure.
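Backpressure does not require special infrastructure. Here is a minimal Python sketch, assuming a single process and an arbitrary capacity of three jobs, using the standard library’s bounded `queue.Queue`: when it is full, `put_nowait` raises `queue.Full`, and the hypothetical `submit` function turns that into an explicit refusal instead of an ever-growing backlog.

```python
import queue

# Bounded buffer: maxsize=3 is an arbitrary, illustrative capacity.
work = queue.Queue(maxsize=3)


def submit(job: str) -> bool:
    """Accept the job if there is room; otherwise push back instead of buffering forever."""
    try:
        work.put_nowait(job)
        return True
    except queue.Full:
        # In an HTTP service, this is where you might answer 429 or 503 to the caller.
        return False


results = [submit(f"job-{i}") for i in range(5)]
print(results)  # [True, True, True, False, False]
```

How the caller reacts to a refusal (retry later, shed load, show an error) is a design decision. The point is that overload becomes visible at the edge instead of hiding in a backlog.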

But What About Scaling?

Avoiding queue-centric architecture does not mean avoiding scale.

Some of the highest-performing systems ever built rely heavily on synchronous execution, direct memory access and intelligent caching, all of which are queue-adjacent but not really queueing. One coherent machine answering direct queries can often outperform dozens of distributed services asynchronously negotiating reality through brokers.

And when a system that does not depend on queues gets slower, the pain point is clearly visible and resolvable. Automatic cloud scaling services are built for exactly this, and they can respond very quickly. Having a few users experience slightly slower performance for a few minutes, or even a few hours, is not the end of the world if it means you avoided running headfirst into a system that is an order of magnitude harder to maintain, debug and analyze.

Summary

Queues are useful. Sometimes indispensable. Bursty workloads are real. Temporary buffering is real. Distributed systems are real.

But queues should remain servants of the architecture, not its organizing principle.

The ideal interaction model is still surprisingly simple: a user asks for something, and the system provides an answer.
